VMware and Customer Confidential

VMware vSphere Plan and Design Services

Architecture Design

for

Customer

Prepared by

Jane Q. Consultant, Sr. Consultant

VMware Professional Services

consultant@vmware.com

VMware vSphere Plan and Design Services

Architecture Design

2010 VMware, Inc. All rights reserved.

Page 2 of 79

Revision History

| Date | Rev | Author | Comments | Reviewers |
|---|---|---|---|---|
| 08 December 2010 | v2.2 | Pat Carri | Pubs Edits/Formatting | Jeff Friedman |
| 07 December 2010 | v2.1 | Andrew Hald | Introduced vCAF, reorganized document flow | John Arrasjid, Michael Mannarino, Wade Holmes, Kaushik Banerjee |
| 04 November 2010 | v2.0 | Jeff Friedman | vSphere 4.1 | Andrew Hald |
| 16 June 2009 | v1.0 | Mark Ewert, Kingsley Turner, Ken Polakowski | | Pang Chen |

DELETE THE FOLLOWING HIGHLIGHTED TEXT AFTER YOU READ IT

This is a representative sample deliverable of a Plan and Design for VMware vSphere engagement, for use when building, rebuilding, or expanding a specific VMware vSphere design. Your actual deliverable for your customer will vary depending on the engagement scope, situation, environment, and requirements. You will need to update this document based on your specific customer.

© 2010 VMware, Inc. All rights reserved. This product is protected by U.S. and international copyright and intellectual property laws. This product is covered by one or more patents listed at http://www.vmware.com/download/patents.html.

VMware is a registered trademark or trademark of VMware, Inc. in the United States and/or other jurisdictions. All other marks and names mentioned herein may be trademarks of their respective companies.

VMware, Inc

3401 Hillview Ave

Palo Alto, CA 94304

www.vmware.com

Design Subject Matter Experts

The people listed in the following table provided key input into this design.

| Name | Email Address | Role/Comments |
|---|---|---|
| | | |

Contents

1. Purpose and Overview
1.1 Executive Summary
1.2 Business Background
1.3 The VMware Consulting and Architecture Framework
2. Conceptual Design Overview
2.1 Customer Requirements
2.2 Design Assumptions
2.3 Design Constraints
2.4 Use Cases
3. vSphere Datacenter Design
3.1 vSphere Datacenter Logical Design
3.2 vSphere Clusters
3.3 Microsoft Cluster Service in an HA/DRS Environment
3.4 VMware FT
4. VMware ESX/ESXi Host Design
4.1 Compute Layer Logical Design
4.2 Host Platform
4.3 Host Physical Design Specifications
5. vSphere Network Architecture
5.1 Network Layer Logical Design
5.2 Network vSwitch Design
5.3 Network Physical Design Specifications
5.4 Network I/O Control
6. vSphere Shared Storage Architecture
6.1 Storage Layer Logical Design
6.2 Shared Storage Platform
6.3 Shared Storage Design
6.4 Shared Storage Physical Design Specifications
6.5 Storage I/O Control
7. VMware vCenter Server System Design
7.1 Management Layer Logical Design
7.2 vCenter Server Platform

7.3 vCenter Server Physical Design Specifications
7.4 vCenter Server and Update Manager Databases
7.5 Licenses
8. vSphere Infrastructure Security
8.1 Overview
8.2 Host Security
8.3 vCenter and Virtual Machine Security
8.4 vSphere Port Requirements
8.5 Lockdown Mode and Troubleshooting Services
9. vSphere Infrastructure Monitoring
9.1 Overview
9.2 Server, Network, and SAN Infrastructure Monitoring
9.3 vSphere Monitoring
9.4 Virtual Machine Monitoring
10. vSphere Infrastructure Patch/Version Management
10.1 Overview
10.2 vCenter Update Manager
10.3 vCenter Server and vSphere Client Updates
11. Backup/Restore Considerations
11.1 Hosts
11.2 Virtual Machines
12. Design Assumptions
12.1 Hardware
12.2 External Dependencies
13. Reference Documents
13.1 Supplemental White Papers and Presentations
13.2 Supplemental KB Articles
13.3 Supplemental KB Articles
Appendix A ESX/ESXi Host Estimation
Appendix B ESX/ESXi Host PCI Configuration
Appendix C Hardware BIOS Settings
Appendix D Network Specifications
Appendix E Storage Volume Specifications

Appendix F LUN Sizing Recommendations
Appendix G Security Configuration
Appendix H Port Requirements
Appendix I Monitoring Configuration
Appendix J Naming Conventions
Appendix K Design Log

List of Figures

Figure 1. Datacenter Logical Design
Figure 2. Network Switch Design
Figure 3. SAN Diagram

List of Tables

Table 1. Infrastructure Design Qualities
Table 2. Infrastructure Design Quality Ratings
Table 3. Customer Requirements
Table 4. Design Assumptions
Table 5. Design Constraints
Table 6. Continuous Availability or High Availability
Table 7. Total Number of Hosts and Clusters Required
Table 8. VMware HA Cluster Configuration
Table 9. Option 1 Name or Option 2 Name
Table 10. VMware ESX/ESXi Specifications
Table 11. VMware ESX/ESXi Host Hardware Physical Design Specifications
Table 12. Option 1 Name or Option 2 Name
Table 13. Proposed Virtual Switches Per Host
Table 14. vDS Configuration Settings
Table 15. vDS Security Settings
Table 16. vSwitches by Physical/Virtual NIC, Port and Function
Table 17. Virtual Switch Port Groups and VLANs
Table 18. Virtual Switch Port Groups and VLANs
Table 19. Option 1 Name or Option 2 Name
Table 20. Shared Storage Logical Design Specifications
Table 21. Shared Storage Physical Design Specifications
Table 22. Storage I/O Enabled
Table 23. Disk Shares and Limits
Table 24. Option 1 Name or Option 2 Name
Table 25. vCenter Server Logical Design Specifications
Table 26. vCenter Server System Hardware Physical Design Specifications
Table 27. vCenter Server and Update Manager Databases Design
Table 28. vCenter Server and Update Manager Database Names

Table 29. SQL Server Database Accounts
Table 30. ODBC System DSN
Table 31. Lockdown Mode Configurations
Table 32. Estimated Update Manager Storage Requirements
Table 33. vCenter Update Manager Specifications
Table 34. Sources of Technical Assumptions for this Design
Table 35. VMware Infrastructure External Dependencies
Table 36. CPU Resource Requirements
Table 37. RAM Resource Requirements
Table 38. Proposed ESX/ESXi Host CPU Logical Design Specifications
Table 39. Proposed ESX/ESXi Host RAM Logical Design Specifications
Table 40. VMware vSphere Consolidation Ratios
Table 41. ESX/ESXi Host PCIe Slot Assignments
Table 42. ESX/ESXi Hostnames and IP Addresses
Table 43. VMFS Volumes
Table 44. NFS Volumes
Table 45. vSphere Roles and Permissions
Table 46. vCenter Virtual Machine and Template Inventory Folders to be used to Secure VMs
Table 47. ESX/ESXi Port Requirements
Table 48. vCenter Server Port Requirements
Table 49. vCenter Converter Standalone Port Requirements
Table 50. vCenter Update Manager Port Requirements
Table 51. SNMP Receiver Configuration
Table 52. vCenter SMTP Settings
Table 53. Physical to Virtual Windows Performance Monitor (Perfmon) Counters
Table 54. Modifications to Default Alarm Trigger Types
Table 55. Design Log

1. Purpose and Overview

1.1 Executive Summary

This VMware vSphere architecture design was developed to support a virtualization project to consolidate 1,000 existing physical servers. The infrastructure defined here will be used not only for this first virtualization effort, but also as a foundation for follow-on projects to completely virtualize the enterprise and to prepare it for the journey to cloud computing.

Virtualization is being adopted to slash power and cooling costs, reduce the need for expensive datacenter expansion, increase operational efficiency, and capitalize on the higher availability and increased flexibility that come with running virtual workloads. The goal is for IT to be well positioned to respond rapidly to ever-changing business needs.

This document details the recommended vSphere foundation architecture, based on a combination of VMware best practices and specific business requirements and goals. The document provides both logical and physical design considerations encompassing all VMware vSphere-related infrastructure components, including requirements and specifications for virtual machines and hosts, networking and storage, and management. After this initial foundation architecture is successfully implemented, it can be rolled out to other locations to support a virtualization-first initiative, meaning that all future x86 workloads will be provisioned on virtual machines by default.

1.2 Business Background

Company and project background:

• Multinational manufacturing corporation with large retail sales and finance divisions
• Vision for the future of IT is to use virtualization as a key enabling technology
• The first foundation infrastructure is to be located at a primary U.S. datacenter in Burlington, Massachusetts
• Initial consolidation project targets 1,000 mission-critical x86 servers

1.3 The VMware Consulting and Architecture Framework

The VMware Consulting and Architecture Framework (vCAF) is a set of tools for delivering all VMware consulting engagements in a standardized way. The framework guides the design process and produces the architecture design. vCAF is executed in the following phases:

• Discovery
  o Understand the customer's business requirements and objectives for the project.
  o Capture the business and technical requirements, assumptions, and constraints for the project.
  o Perform or secure a current state analysis of the customer's existing VMware vSphere environment.
• Development
  o Conduct design workshops and interviews with the following subject matter experts: application, business continuity (BC) and disaster recovery (DR), environment, storage, networking, security, server administration, and operations. The goal of these workshops is to transform the business and technical requirements into a logical design that is scalable and flexible.
  o Discuss design options with tradeoffs and benefits. Compare the design choices with their impact against key infrastructure qualities as defined by vCAF.
• Execution
  o Build the vSphere infrastructure according to the physical design specifications.
  o Execute verification procedures to confirm operational and technical success.
• Review
  o Identify next steps.
  o Re-calibrate enterprise goals and identify any new or modified objectives.
  o Match enterprise goals to future engagement of professional services.

The infrastructure qualities shown in the following table are used to categorize requirements and design decisions as well as to assess infrastructure maturity.

Table 1. Infrastructure Design Qualities

| Design Quality | Description |
|---|---|
| Availability | Indicates the effect of a design choice on the ability of a technology and the related infrastructure to achieve highly available operation. Key metrics: % uptime. |
| Manageability | Indicates the effect of a design choice on the flexibility of an environment and the ease of operations in its management. Sub-qualities may include scalability and flexibility. Higher ratios are considered better indicators. Key metrics: servers per administrator; clients per IT personnel; time to deploy new technology. |
| Performance | Indicates the effect of a design choice on the performance of the environment. This does not necessarily reflect the impact on other technologies within the infrastructure. Key metrics: response time; throughput. |
| Recoverability | Indicates the effect of a design choice on the ability to recover from an unexpected incident that affects the availability of an environment. Key metrics: recovery time objective (RTO); recovery point objective (RPO). |
| Security | Indicates whether a design choice has a positive or negative impact on overall infrastructure security. Can also indicate whether a design choice affects the ability of a business to demonstrate or achieve compliance with certain regulatory policies. Key metrics: unauthorized access prevention; data integrity and confidentiality; forensic capabilities in case of a compromise. |
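To make the "% uptime" metric for Availability concrete, the following minimal sketch converts an uptime target into the downtime it allows over a period. The function name `allowed_downtime_minutes` and the example targets are illustrative assumptions, not values taken from this design.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: float = 365.0) -> float:
    """Return the downtime budget, in minutes, implied by an uptime percentage."""
    if not 0.0 <= uptime_percent <= 100.0:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (1.0 - uptime_percent / 100.0)


if __name__ == "__main__":
    # Hypothetical targets, chosen only to illustrate the arithmetic.
    for target in (99.0, 99.9, 99.99):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% uptime allows about {minutes:.1f} minutes of downtime per year")
```

Under these assumptions, a 99.9% target leaves roughly 525.6 minutes (under nine hours) of downtime per 365-day year, which is a useful number to hold against any stated RTO.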

All qualities are rated as shown in the following table.

Table 2. Infrastructure Design Quality Ratings

| Symbol | Definition |
|---|---|
| ↑ | Positive effect on the design quality |
| o | No effect on the design quality or there is no comparison basis |
| ↓ | Negative effect on the design quality |

This document captures the design decisions made for the solution to meet customer requirements. In some cases, customer-specific requirements and existing infrastructure limitations or constraints might result in a valid but sub-optimal design choice.

The primary goal of this design is to provide a service that corresponds with the business objectives of the organization. With financial constraints factored into the decision process, the key qualities to take into consideration are:

• Availability
• Recoverability
• Performance
• Manageability
• Security

2. Conceptual Design Overview

DELETE THE FOLLOWING HIGHLIGHTED TEXT AFTER YOU READ IT

At the top of this section, insert text describing the key motivations for this design effort. What are the customer's pain points? What are the factors driving business decisions in IT?

Fill in the requirements, assumptions, and constraints below with information gathered during your engagement.

The key customer drivers and requirements guide all design activities throughout an engagement. Requirements, assumptions, and constraints are carefully logged so that all logical and physical design elements can be easily traced back to their source and justification.

2.1 Customer Requirements

Requirements are the key demands on the design. Sources include both business and technical representatives.

Table 3. Customer Requirements

| ID | Requirement | Source | Date Approved |
|---|---|---|---|
| r101 | Tier 1 services must meet a one-hour RTO. | Fred Jones | |
| r102 | PCI-compliant services require isolation from other services. | David Johnson | |

2.2 Design Assumptions

Assumptions are introduced to reduce design complexity and represent design decisions that are already factored into the environment.

Table 4. Design Assumptions

| ID | Assumption | Source | Date Approved |
|---|---|---|---|
| a101 | All services belonging to the Billing Department can be considered as Tier 2 for the purposes of DR planning. | James Hamilton | |
| a102 | Performance is considered acceptable if the end user does not notice a difference between the original platform and the new design. | David Johnson | |

2.3 Design Constraints

Constraints limit the logical design decisions and physical specifications. They are decisions made independently of this engagement that may or may not align with stated objectives.

Table 5. Design Constraints

| ID | Constraint | Source | Date Approved |
|---|---|---|---|
| c101 | IBM will provide the server hardware. | Fred Jones | |
| c102 | QLogic HBAs will be used in the ESX hosts. | Fred Jones | |
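Because every requirement, assumption, and constraint carries an ID, traceability can be checked mechanically. The sketch below is one possible way to do this and is not part of the vCAF tooling: the `DesignInput` type, the `untraceable` helper, and the ID `r999` are hypothetical names introduced for illustration, while the logged entries are copied from Tables 3, 4, and 5.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignInput:
    id: str      # for example "r101", "a101", or "c101"
    kind: str    # "requirement", "assumption", or "constraint"
    text: str
    source: str


# Entries copied from Tables 3, 4, and 5 of this document.
DESIGN_INPUTS = [
    DesignInput("r101", "requirement", "Tier 1 services must meet a one-hour RTO.", "Fred Jones"),
    DesignInput("r102", "requirement", "PCI-compliant services require isolation from other services.", "David Johnson"),
    DesignInput("a101", "assumption", "Billing Department services can be considered Tier 2 for DR planning.", "James Hamilton"),
    DesignInput("a102", "assumption", "Performance is acceptable if the end user notices no difference.", "David Johnson"),
    DesignInput("c101", "constraint", "IBM will provide the server hardware.", "Fred Jones"),
    DesignInput("c102", "constraint", "QLogic HBAs will be used in the ESX hosts.", "Fred Jones"),
]


def untraceable(references, inputs=DESIGN_INPUTS):
    """Return the referenced IDs that do not appear in the logged design inputs."""
    known = {item.id for item in inputs}
    return sorted(set(references) - known)


if __name__ == "__main__":
    # "r999" is a made-up ID used to show how a dangling reference is reported.
    print(untraceable(["r101", "c102", "r999"]))
```

Running a check like this over the IDs cited in each design decision is a cheap way to catch references that were never logged or were renumbered during editing.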

2.4 Use Cases

This design is targeted at the following use cases:

• Server consolidation (power and cooling savings, green computing)
• Server infrastructure resource optimization (load balancing, high availability)
• Rapid provisioning
• Server standardization

The following use cases are deferred to a future project:

• Server containment (new workloads)

3. vSphere Datacenter Design

3.1 vSphere Datacenter Logical Design

In VMware vSphere, a datacenter is the highest-level logical boundary. The datacenter may be used to delineate separate physical sites/locations or vSphere infrastructures with completely independent purposes.

Within vSphere datacenters, VMware ESX/ESXi hosts are typically organized into clusters. Clusters group similar hosts into a logical unit of virtual resources, enabling such technologies as VMware vMotion, High Availability (HA), Distributed Resource Scheduler (DRS), and VMware Fault Tolerance (FT).

To address customer requirements, the following design options were proposed during the design workshops. For each design decision, the impact on each infrastructure quality is noted. The selected design option is then explained with the appropriate justification.

DELETE THE FOLLOWING HIGHLIGHTED GUIDANCE TEXT AFTER YOU READ IT AND REMOVE THE HIGHLIGHTING FROM THE DESIGN DECISION TEMPLATE.

The following design decision is an example. Follow the model below to communicate the design decisions appropriate to your customer and their requirements.

3.1.1 Tier 2 Service Availability

Customer requirements have not explicitly defined the service level availability for Tier 2 services. The following two options are available.

3.1.1.1. Option 1: Continuous Availability

Continuously available services:

• Are redundant at the application level
• Have no single points of failure

The drawbacks of continuous availability are:

• More expensive infrastructure
• Not all applications are compatible with continuous availability methods

3.1.1.2. Option 2: High Availability

Highly available services:

• Limit single points of failure
• Are less expensive to support

The drawbacks of high availability are:

• Some service downtime is possible
• Application awareness requires additional effort

Tier 2 services are not defined as mission critical, but they are still important to the daily business operations of the customer. Making no provision for service availability is not an acceptable option. Budgetary constraints must be balanced with availability requirements so that if a problem occurs, the service is restored within one hour, as stated in the service level agreement for Tier 2 services (r007).

Table 6. Continuous Availability or High Availability

| Design Quality | Option 1 | Option 2 | Comments |
|---|---|---|---|
| Availability | ↑ | ↑ | Both options improve availability, though Option 1 would guarantee a higher level. |
| Manageability | ↓ | o | Option 1 would be harder to maintain due to increased complexity. |
| Performance | o | o | Neither design option has an impact on performance. |
| Recoverability | ↑ | ↑ | Both options improve recoverability. |
| Security | o | o | Neither design option has an impact on security. |

Legend: ↑ = positive impact on quality; ↓ = negative impact on quality; o = no impact on quality

Due to the lower cost and better manageability, the customer has selected Option 2, High Availability. This design decision is reflected in the physical design specifications outlined below.
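The comparison in Table 6 can also be tallied numerically. The sketch below is an illustration only: it assumes a simple scoring of +1 for a positive impact, 0 for no impact, and -1 for a negative impact, and the per-quality ratings are inferred from the comments column of Table 6 rather than quoted from it. Cost, which also drove the customer's choice, is not one of the rated qualities and is not modeled here.

```python
# Assumed numeric weights for the three rating levels.
RATING = {"positive": 1, "none": 0, "negative": -1}

# Ratings inferred from the comments in Table 6 (an assumption, not a quotation).
OPTIONS = {
    "Option 1: Continuous Availability": {
        "Availability": "positive",
        "Manageability": "negative",
        "Performance": "none",
        "Recoverability": "positive",
        "Security": "none",
    },
    "Option 2: High Availability": {
        "Availability": "positive",
        "Manageability": "none",
        "Performance": "none",
        "Recoverability": "positive",
        "Security": "none",
    },
}


def net_score(ratings):
    """Sum the numeric weight of each rated design quality."""
    return sum(RATING[level] for level in ratings.values())


if __name__ == "__main__":
    for name, ratings in OPTIONS.items():
        print(f"{name}: net score {net_score(ratings)}")
```

An unweighted tally like this treats all five qualities as equally important. In a real engagement the qualities would normally be weighted by the customer's priorities before any comparison is drawn.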

3.2 vSphere Clusters

As part of this logical design, vSphere clusters are created to aggregate hosts. The number of

hosts per cluster is shown in the following table.

Table 7. Total Number of Hosts and Clusters Required

| Attribute | Specification |
|---|---|
| Number of hosts required to support 1,000 VMs | 17 |
| Approximate number of VMs per host | 58.82 |
| Maximum vSphere cluster size if hosts support more than 40 VMs each | 16 hosts |
| Capacity for host failures per cluster | 1 host |
| Dedicated hosts for maintenance capacity per cluster | 1 host |
| Number of usable hosts per cluster | 6 hosts |
| Number of clusters created | 3 |
| Total usable capacity in hosts | 18 hosts |
| Total usable capacity in VMs (total usable hosts * true consolidation ratio) | 1,084 |

Assumptions and Caveats

The total usable capacity in VMs does not account for shadow instances of VMs needed to

support VMware FT. The number of protected VMs must be counted double.

Host failures for VMware HA are expressed in the number of allowable host failures, meaning

that the expected load should be able to run on surviving hosts. HA policies can also be

applied on a percentage spare capacity basis.

Dedicated hosts for maintenance assumes that a host is reserved strictly to offload running

VMs from other hosts that must undergo maintenance. When not being used for

maintenance, such hosts can also provide additional spare capacity to support a second host

failure, or for unusually high demands on resources. Having dedicated maintenance hosts

can be considered somewhat conservative, as spare capacity is being allocated strictly for

maintenance activities. Such spare capacity is earmarked here to ensure that there is

sufficient capacity to run VMs with minimal disruption.

Hosts are evenly divided across the clusters.

Clusters can be created and organized to enforce resource allocation policies such as:

o Load balancing

o Power management

o Affinity rules

o Protected workloads

o Limited number of licenses available for specific applications
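The host and cluster counts in Table 7 can be reproduced with a few lines of arithmetic. The following is a minimal sketch, not part of the original deliverable; the function name is hypothetical, and the 8-host cluster size is an assumption read from the host ranges in Figure 1 (Hosts 1-8, 9-16, 17-24).

```python
import math

def size_clusters(hosts_required, hosts_per_cluster, failover_hosts, maintenance_hosts):
    """Return (usable hosts per cluster, clusters needed,
    total usable hosts, total physical hosts)."""
    usable_per_cluster = hosts_per_cluster - failover_hosts - maintenance_hosts
    if usable_per_cluster <= 0:
        raise ValueError("cluster has no usable hosts after reserving spare capacity")
    # Round up: a partially filled cluster still has to be built.
    clusters = math.ceil(hosts_required / usable_per_cluster)
    return (usable_per_cluster,
            clusters,
            clusters * usable_per_cluster,
            clusters * hosts_per_cluster)

# 17 hosts required, 1 failover host and 1 maintenance host per cluster (Table 7).
# The cluster size of 8 is an assumption taken from Figure 1.
print(size_clusters(17, 8, 1, 1))  # (6, 3, 18, 24)
```

With these inputs the sketch yields 6 usable hosts per cluster, 3 clusters, and 18 usable hosts, matching the table; the 24 physical hosts correspond to the host numbering in the figure.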

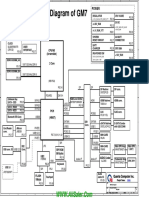

Figure 1. Datacenter Logical Design

[Figure 1 labels: Datacenter A; Datacenter B; Tier One Cluster (Host & VM Affinity Rules, Fault Tolerance); Tier Two Cluster (Distributed Power Management); Tier Three Cluster (Application Cluster for limited licenses); Hosts 1-8; Hosts 9-16; Hosts 17-24; vCenter Server VM (with VUM, Converter plug-ins); MS SQL Server DB (vCenter DB, VUM DB); Active Directory]

3.2.1 VMware HA

Each cluster is configured for VMware High Availability (HA) to automatically recover VMs if an ESX/ESXi host fails or if an individual VM fails. A host is declared failed if the other hosts in the cluster cannot communicate with it. A VM is declared failed if heartbeats from inside the guest OS are no longer received.

VMs are tiered in relative order of priority for restarts:

High (for example, Windows Active Directory domain controller VMs).

Medium (default).

Low.

Disabled. For non-critical VMs (for example, QA and test VMs). These VMs are not restarted, which leaves resources free for higher priority VMs.
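The restart tiers above amount to a simple ordering rule. The sketch below is illustrative only and does not use any VMware API; the VM names and the `restart_order` helper are hypothetical.

```python
# Restart priority ranks; VMs marked "disabled" are never restarted by HA.
PRIORITY_RANK = {"high": 0, "medium": 1, "low": 2}

def restart_order(vms):
    """Return VM names in restart order, skipping VMs whose priority is 'disabled'.

    vms is a list of (name, priority) tuples.
    """
    eligible = [(name, prio) for name, prio in vms if prio != "disabled"]
    # sorted() is stable, so VMs with equal priority keep their input order.
    ordered = sorted(eligible, key=lambda vm: PRIORITY_RANK[vm[1]])
    return [name for name, _ in ordered]

# Hypothetical VM names used only for illustration.
vms = [("web01", "medium"), ("qa-test01", "disabled"),
       ("dc01", "high"), ("batch01", "low")]
print(restart_order(vms))  # ['dc01', 'web01', 'batch01']
```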

The configuration settings for VMware HA are shown in the following table.

Table 8. VMware HA Cluster Configuration

| Attribute | Specification |
|---|---|
| Enable host monitoring | Enable |
| Admission control | Prevent VMs from being powered on if they violate availability constraints |
| Admission control policy | Cluster tolerates one host failure |
| Default VM restart priority | High (critical VMs); Medium (majority of VMs); Disabled (non-critical VMs) |
| Host isolation response | Power off VM |
| Enable VM monitoring | Enable |
| VM monitoring sensitivity | Medium |

Setting Explanations

Enable host monitoring. When HA is enabled, hosts in the cluster are monitored. If there is

a host failure, the virtual machines on a failed host are restarted on alternate running hosts in

the cluster.

Admission control. Enforces availability constraints and preserves host failover capacity.

Any operation on a virtual machine that decreases the unreserved resources in the cluster

and violates availability constraints is not permitted.

Admission control policy. Each HA cluster can support as many host failures as specified.

Default VM restart priority. The priority level specified here is relative. VMs must be

assigned a relative restart priority level for HA. VMs are organized into four categories: high,

medium, low, and disabled. It is presumed that the majority of systems will be satisfied by the

medium setting and are therefore left at default. VMs identified as high priority, such as the

Active Directory VMs, are started before the medium priority VMs, which in turn are restarted

before the VMs configured with low priority. If insufficient cluster resources are available, it is

possible that VMs configured with low priority will not be restarted. To help prevent this

situation, non-critical systems, such as QA and test VMs, are set to disabled. If there is a host

failure, these VMs are not restarted, saving critical cluster resources for higher priority VMs.

Host isolation response. Host isolation response determines what happens when a host in

a VMware HA cluster loses its service console/management network connection, but

continues running. A host is deemed isolated when it stops receiving heartbeats from all

other hosts in the cluster and it is unable to ping its isolation addresses. When this occurs,

the host executes its isolation response. To prevent multiple instances of the same virtual machine from running if a host becomes isolated from the network (causing other hosts to believe it has failed and automatically restart the host's VMs), the VMs are automatically powered off upon host isolation.

Enable VM monitoring. In addition to determining if a host has failed, HA can also monitor

for virtual machine failure. When set to enabled, the VM monitoring service (using VMware

Tools) evaluates whether each virtual machine in the cluster is running by checking for

regular heartbeats from the VMware Tools process running in each guest OS. If no

heartbeats are received, HA assumes that the guest operating system has failed, and HA

reboots the VM.

VM monitoring sensitivity. This setting determines how quickly HA concludes that a VM has failed. Highly sensitive monitoring results in a more rapid conclusion that a failure occurred.

While unlikely, highly sensitive monitoring may lead to false identification of failures when the

virtual machine in question is actually still working, but heartbeats have not been received

due to factors such as resource constraints or network issues. Low sensitivity monitoring

allows for more time before HA deems a VM to have failed. At the medium setting, HA restarts the VM if no heartbeat between the host and the VM is received within a 60-second interval. HA also restarts the VM only after each of the first three failures in any 24-hour period, which prevents repeated restarts of VMs that need intervention to recover.
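The medium-sensitivity behavior described above (a 60-second heartbeat interval and at most three resets per 24 hours) can be expressed as a small decision function. This is a simplified sketch of the rule as stated in this section, not VMware's implementation; the function and constant names are hypothetical.

```python
FAILURE_INTERVAL_S = 60          # medium sensitivity: no heartbeat for 60 seconds
MAX_RESETS = 3                   # at most three resets ...
RESET_WINDOW_S = 24 * 60 * 60    # ... within a 24-hour window

def should_reset(now, last_heartbeat, reset_times):
    """Decide whether the VM would be reset at time `now` (all times in seconds)."""
    if now - last_heartbeat < FAILURE_INTERVAL_S:
        return False  # a heartbeat was seen recently enough; VM is considered healthy
    # Count only the resets that fall inside the sliding 24-hour window.
    recent = [t for t in reset_times if now - t < RESET_WINDOW_S]
    return len(recent) < MAX_RESETS

print(should_reset(now=1000, last_heartbeat=990, reset_times=[]))               # False
print(should_reset(now=1000, last_heartbeat=900, reset_times=[]))               # True
print(should_reset(now=1000, last_heartbeat=900, reset_times=[100, 200, 300]))  # False
```

The third call shows the reset cap: the heartbeat is overdue, but three resets already occurred within the window, so the VM is left for manual intervention.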

3.3 Microsoft Cluster Service in an HA/DRS Environment

In this section, Microsoft Cluster Service (MSCS) refers to both Microsoft Cluster Service with Windows Server 2003 and Failover Clustering with Windows Server 2008.

All hosts that run MSCS virtual machines are managed by a VMware vCenter Server system

with VMware HA and DRS enabled. For a cluster of virtual machines on one physical host, affinity

rules are used. For a cluster of virtual machines across physical hosts, anti-affinity rules are used.

The advanced option for VMware DRS, ForceAffinePoweron, is set to 1, which enables strict enforcement of the affinity and anti-affinity rules that are created. The automation level of all virtual machines in an MSCS cluster is set to Partially Automated.

Note Migration of MSCS clustered virtual machines is not recommended.
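The two rule types used for MSCS can be checked against a proposed VM placement as follows. This is a minimal sketch for reasoning about the rules, not a DRS API call; the VM names, host names, and the `rule_satisfied` helper are hypothetical.

```python
def rule_satisfied(rule_type, vm_names, placement):
    """Check a rule against a placement mapping of VM name -> host name."""
    hosts = {placement[vm] for vm in vm_names}
    if rule_type == "affinity":
        # Cluster of VMs on one physical host: all nodes share a host.
        return len(hosts) == 1
    if rule_type == "anti-affinity":
        # Cluster of VMs across physical hosts: every node is on its own host.
        return len(hosts) == len(vm_names)
    raise ValueError(f"unknown rule type: {rule_type}")

# Hypothetical VM and host names.
placement = {"mscs-a1": "esx01", "mscs-a2": "esx01",
             "mscs-b1": "esx02", "mscs-b2": "esx03"}
print(rule_satisfied("affinity", ["mscs-a1", "mscs-a2"], placement))       # True
print(rule_satisfied("anti-affinity", ["mscs-b1", "mscs-b2"], placement))  # True
print(rule_satisfied("anti-affinity", ["mscs-a1", "mscs-a2"], placement))  # False
```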

3.4 VMware FT

Each cluster also supports VMware Fault Tolerance (FT) to protect select critical VMs. The

systems to protect are:

2 Blackberry Enterprise servers

2 Microsoft Exchange front-end servers

2 Reporting servers

These six systems are distributed evenly amongst the three clusters resulting in two FT-protected

virtual machines per eight hosts initially.

All VMs to be protected by VMware FT have only one vCPU and use eager-zeroed thick disks (not thin-provisioned). An eager-zeroed thick disk has all space allocated and zeroed out at creation time; creation takes longer, but the disk delivers optimal performance and better security.

VMware Fault Tolerance is enabled together with VMware Distributed Resource Scheduler (DRS). This combination allows fault tolerant virtual machines to benefit from better initial placement and to be included in the cluster's load balancing calculations.

Note Enable the Enhanced vMotion Compatibility (EVC) feature.

On-Demand Fault Tolerance is scheduled for the two reporting servers during the quarter-end

report period and then returned to the HA cluster during non-critical operations.

FT traffic is supported with a pair of Gigabit Ethernet ports (see Section 5, vSphere Network

Architecture). Because a pair of Gigabit Ethernet ports can support on average 4 to 5 FT-

protected VMs per host, there is capacity for additional VMs to be protected by VMware FT.
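The even distribution of the six FT-protected systems across the three clusters can be sketched as a round-robin assignment. The VM names and the `distribute` helper are hypothetical, and the cluster names are taken from Figure 1; this illustrates the placement intent only.

```python
from itertools import cycle

def distribute(vms, clusters):
    """Assign VMs to clusters round-robin so each cluster gets an equal share."""
    assignment = {cluster: [] for cluster in clusters}
    for vm, cluster in zip(vms, cycle(clusters)):
        assignment[cluster].append(vm)
    return assignment

# Hypothetical names for the six systems listed above.
ft_vms = ["bes-1", "bes-2", "exch-fe-1", "exch-fe-2", "report-1", "report-2"]
clusters = ["Tier One", "Tier Two", "Tier Three"]

plan = distribute(ft_vms, clusters)
for cluster, members in plan.items():
    print(cluster, members)
```

A side effect of round-robin assignment is that the two servers of each pair land in different clusters, so a single cluster-level problem does not affect both.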

4. VMware ESX/ESXi Host Design

4.1 Compute Layer Logical Design

The compute layer of the architecture design encompasses the CPU, memory, and hypervisor

technology components. Logical design at this level centers on performance and security.

To address customer requirements, the following design options were proposed during the design

workshops. For each design decision, the impact on each infrastructure quality is noted. The

selected design option is then explained with the appropriate justification.

DELETE THE FOLLOWING HIGHLIGHTED GUIDANCE TEXT AFTER YOU READ IT AND

REMOVE THE HIGHLIGHTING FROM THE DESIGN DECISION TEMPLATE.

The following Design Decision is an example. Please follow the model below to communicate the

design decisions appropriate to your customer and their requirements. See Section 3.1 for an

example.

4.1.1 Design Decision 1

Description of the design decision

4.1.1.1. Option 1: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

4.1.1.2. Option 2: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

Further details should be included here. Also highlight any relevant requirements, assumptions

and/or constraints that will impact this decision.

Table 9. Option 1 Name or Option 2 Name

| Design Quality | Option 1 | Option 2 | Comments |
|---|---|---|---|
| Availability | ↑ | ↑ | Both options improve availability, though Option 1 would guarantee a higher level. |
| Manageability | ↓ | o | Option 1 would be harder to maintain due to increased complexity. |
| Performance | o | o | Both design options have no impact on performance. |
| Recoverability | ↑ | ↑ | Both options improve recoverability. |
| Security | o | o | Both design options have no impact on security. |

Legend: ↑ = positive impact on quality; ↓ = negative impact on quality; o = no impact on quality

Which option was selected and why?

4.2 Host Platform

This section details the VMware ESX/ESXi hosts proposed for the vSphere infrastructure design.

The logical components specified are required by the vSphere architecture to meet calculated

consolidation ratios, protect against failure through component redundancy, and support all

necessary vSphere features.

Table 10. VMware ESX/ESXi Specifications

| Attribute | Specification |
|---|---|
| Host type and version | ESXi 4.1 Installable |
| Number of CPUs | 4 |
| Number of cores | 4 |
| Total number of cores | 16 |
| Processor speed | 2.4 GHz (2400 MHz) |
| Memory | 32GB |
| Number of NIC ports | 10 |
| Number of HBA ports | 4 |

VMware ESXi was selected over VMware ESX because of its smaller running footprint, reduced management complexity, and significantly fewer anticipated software patches.

The exact ESXi installable build version to be deployed will be selected closer to implementation

and will be chosen based on the available stable and supported released versions at that time.
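To relate the host specification in Table 10 to the consolidation ratio in Table 7, the raw per-VM share of CPU and memory can be computed as below. This is a rough sketch under stated assumptions: "Number of cores" is read as cores per CPU, the 58.82 VMs-per-host figure comes from Table 7, and hypervisor overhead and memory overcommitment are ignored. The function name is hypothetical.

```python
def host_capacity(sockets, cores_per_socket, mhz_per_core, memory_gb, vms_per_host):
    """Return raw host totals and the average raw share available to each VM."""
    total_cores = sockets * cores_per_socket
    total_mhz = total_cores * mhz_per_core
    return {
        "total_cores": total_cores,
        "total_mhz": total_mhz,
        "mhz_per_vm": total_mhz / vms_per_host,
        "memory_mb_per_vm": memory_gb * 1024 / vms_per_host,
    }

cap = host_capacity(sockets=4, cores_per_socket=4, mhz_per_core=2400,
                    memory_gb=32, vms_per_host=58.82)
print(cap["total_cores"], cap["total_mhz"])  # 16 38400
print(round(cap["mhz_per_vm"]), round(cap["memory_mb_per_vm"]))
```

The per-VM values are averages only; actual VM sizing depends on the workload assessment described elsewhere in the design.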

4.3 Host Physical Design Specifications

This section details the physical design specifications of the host and attachments corresponding

to the previous section that describes the logical design specifications.

Table 11. VMware ESX/ESXi Host Hardware Physical Design Specifications

| Attribute | Specification |
|---|---|
| Vendor and model | x64 vendor and model |
| Processor type | Quad core x64-vendor CPU |
| Total number of cores | 16 |
| Onboard NIC vendor and model | NIC vendor and model |
| Onboard NIC ports x speed | 2 x Gigabit Ethernet |
| Number of attached NICs | 4 (excluding onboard) |
| NIC vendor and model | NIC vendor and model |
| Number of ports/NIC x speed | 2 x Gigabit Ethernet |
| Total number of NIC ports | 10 |
| Storage HBA vendor and model | HBA vendor and model |
| Storage HBA type | Fibre Channel |
| Number of HBAs | 2/4GB |
| Number of ports/HBA x speed | 2 x 4GB |
| Total number of HBA ports | 4 |
| Number and type of local drives | 2 x Serial Attached SCSI (SAS) |
| RAID level | RAID 1 (Mirror) |
| Total storage | 72GB |
| System monitoring | IPMI-based BMC |

The configuration and assembly process for each system is standardized, with all components

installed the same on each host. Standardizing not only the model, but also the physical

configuration of the ESX/ESXi hosts, is critical to providing a manageable and supportable

infrastructure because it eliminates variability. Consistent PCI card slot location, especially for network

controllers, is essential for accurate alignment of physical to virtual I/O resources. Appendix B

contains further information on the host PCI placement.

All ESX/ESXi host hardware, including CPUs, was selected following the vSphere Hardware Compatibility Lists, and the CPUs were determined to be compatible with Fault Tolerance.

5. vSphere Network Architecture

5.1 Network Layer Logical Design

The network layer encompasses all network communications between virtual machines, the vSphere management layer, and the physical network. Key infrastructure qualities often associated with networking include availability, security, and performance.

To address customer requirements, the following design options were proposed during the design

workshops. For each design decision, the impact on each infrastructure quality is noted. The

selected design option is then explained with the appropriate justification.

DELETE THE FOLLOWING HIGHLIGHTED GUIDANCE TEXT AFTER YOU READ IT AND

REMOVE THE HIGHLIGHTING FROM THE DESIGN DECISION TEMPLATE.

The following Design Decision is an example. Please follow the model below to communicate the

design decisions appropriate to your customer and their requirements. See Section 3.1 for an

example.

5.1.1 Design Decision 1

Description of the design decision

5.1.1.1. Option 1: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

5.1.1.2. Option 2: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

Further details should be included here. Also highlight any relevant requirements, assumptions

and/or constraints that will impact this decision.

Table 12. Option 1 Name or Option 2 Name

| Design Quality | Option 1 | Option 2 | Comments |
|---|---|---|---|
| Availability | ↑ | ↑ | Both options improve availability, though Option 1 would guarantee a higher level. |
| Manageability | ↓ | o | Option 1 would be harder to maintain due to increased complexity. |
| Performance | o | o | Both design options have no impact on performance. |
| Recoverability | ↑ | ↑ | Both options improve recoverability. |
| Security | o | o | Both design options have no impact on security. |

Legend: ↑ = positive impact on quality; ↓ = negative impact on quality; o = no impact on quality

Which option was selected and why?

5.2 Network vSwitch Design

Following best practices, the network architecture complies with these design decisions:

Separate networks for vSphere management, VM connectivity, vMotion traffic, and Fault

Tolerance logging (VM record/replay) traffic

Separate network for NFS or iSCSI (storage over IP) used to store VM templates and guest

OS installation ISO files

Network I/O control implemented to allow flexible partitioning of network traffic for virtual

machine, vMotion, FT, and IP storage traffic across the physical NIC bandwidth

Redundant virtual Distributed Switches (vDS) with at least three active physical adapter ports

Redundancy across different physical adapters to protect against NIC or PCI slot failure

Redundancy at the physical switch level

Table 13. Proposed Virtual Switches Per Host

| Virtual Standard (vSS) or Virtual Distributed Switch (vDS) | Function | Number of Physical NIC Ports |
|---|---|---|
| vSS0 | Management Console and vMotion | 2 |
| vDS1 | VM, Storage over IP and FT | 6 |

vSS0 is dedicated to the management network and vMotion. Service consoles and VMkernel ports (used for vMotion and IP storage) do not migrate from host to host; these can remain on a Virtual Standard Switch (vSS).

vDS1 is allocated to virtual machine, IP storage, and Fault Tolerance network traffic. This switch should be configured as a Distributed Switch to take advantage of Network I/O Control, Load-Based Teaming, and Network vMotion.

No traffic shaping policies are in place. Load-based teaming is configured for improved network traffic distribution between the pNICs, and Network I/O Control is enabled.

VM network connectivity uses virtual switch port groups and 802.1q VLAN tagging to segment traffic into four VLANs.

To support the network demands of up to 60 VMs per host, this vDS is configured to use six active Gigabit Ethernet adapters. All physical network switch ports connected to these adapters are configured as trunk ports with spanning tree disabled. The trunk ports are configured to pass traffic for all VLANs used by the virtual switch.

The physical NIC ports are connected to redundant physical switches.

Figure 2. Network Switch Design

[Figure 2 labels: ESX/ESXi Host; Virtual Standard Switch0 (Management Console port group; VMkernel vMotion port group); Virtual Distributed Switch1 (Virtual Machine Network port group; VMkernel Storage over IP port group; VMkernel Fault Tolerance port group); uplinks vmnic0, vmnic1, vmnic6, vmnic7 (Onboard) and vmnic2, vmnic3, vmnic4, vmnic5 (Slot 7), with Active and Standby markers; Physical Switch1; Physical Switch2; VLAN 100, VLAN 500, VLAN 200, VLAN 300, VLAN 600, VLAN 650]

Table 14. vDS Configuration Settings

| Parameter | Setting |
|---|---|
| Load balancing | Route based on physical NIC load |
| Failover detection | Beacon probing |
| Notify switches | Enabled |
| Rolling failover | No |
| Failover order | All active |

vSwitch Configuration Setting Explanations

Load Balancing. Route based on physical NIC load ensures that vDS dvUplink capacity is optimized. Load-Based Teaming (LBT) avoids the situation, possible with other teaming policies, in which some of the dvUplinks in a DV Port Group's team are idle while others are completely saturated because the teaming policy is statically determined. LBT reshuffles port bindings dynamically based on load and dvUplink usage to make efficient use of the available bandwidth. LBT only moves ports to dvUplinks configured for the corresponding DV Port Group's team. Note that LBT does not use shares or limits to make its determination while rebinding ports from one dvUplink to another.

Failover Detection. In addition to link status, the VMkernel sends out and listens for periodic

beacon probes on all network adapters in the team. This enhances link status, which relies

exclusively on link integrity of the physical network adapter to determine when a failure

occurs. Link status enhanced by beacon probing detects failures that are due to cable

disconnects or physical switch power failures, as well as configuration errors or network

interruptions beyond the local NIC termination point.

Notify Switches. When enabled, this option sends out a gratuitous ARP whenever a new

NIC is added to the team or when a virtual NIC begins using a different physical uplink on the

ESX/ESXi host. This option helps reduce latency when a failover occurs or when

virtual machines are migrated to another host using vMotion.

Rolling Failover. Determines how a physical adapter is returned to active duty after

recovering from a failure. When set to No, the adapter is returned to active duty immediately

upon recovery. Setting it to Yes keeps the adapter inactive even after it recovers; manual intervention is required to return it to service.

Failover Order. All physical adapters assigned to each vSwitch and port group are

configured as Active adapters. No adapters are configured as standby or unused.
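The idea behind load-based teaming, rebinding a port away from a saturated uplink, can be illustrated with a deliberately simplified sketch. This is not VMware's algorithm: the 75% saturation threshold, the single-move policy, and all names below are assumptions made for illustration.

```python
SATURATION_THRESHOLD = 0.75  # assumed trigger level; not stated in this document

def rebalance(port_load, binding, uplink_capacity):
    """Move one port from the busiest uplink to the least busy one if it is saturated.

    port_load: port -> Mbps, binding: port -> uplink, uplink_capacity: uplink -> Mbps.
    Returns a new binding dict; the input is not modified.
    """
    usage = {uplink: 0.0 for uplink in uplink_capacity}
    for port, uplink in binding.items():
        usage[uplink] += port_load[port]

    def utilization(uplink):
        return usage[uplink] / uplink_capacity[uplink]

    busiest = max(usage, key=utilization)
    if utilization(busiest) <= SATURATION_THRESHOLD:
        return dict(binding)  # nothing is saturated; keep the current bindings
    idlest = min(usage, key=utilization)
    # Move the lightest port on the busy uplink to limit disruption.
    candidates = [p for p, u in binding.items() if u == busiest]
    port = min(candidates, key=lambda p: port_load[p])
    new_binding = dict(binding)
    new_binding[port] = idlest
    return new_binding

capacity = {"dvUplink1": 1000, "dvUplink2": 1000}
load = {"vm-a": 500, "vm-b": 400, "vm-c": 50}
binding = {"vm-a": "dvUplink1", "vm-b": "dvUplink1", "vm-c": "dvUplink2"}
print(rebalance(load, binding, capacity))
# {'vm-a': 'dvUplink1', 'vm-b': 'dvUplink2', 'vm-c': 'dvUplink2'}
```

Note that the decision uses only measured load per uplink; consistent with the explanation above, shares and limits play no part in it.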

Table 15. vDS Security Settings

| Parameter | Setting |
|---|---|
| Promiscuous mode | Reject (default) |
| MAC address changes | Reject |
| Forged transmits | Reject |

vDS Security Setting Explanations

Promiscuous Mode. Setting to Reject at the vSwitch level protects against virtual machine virtual network adapters capturing traffic that is not addressed to them. With this setting, placing a VM virtual network adapter in promiscuous mode has no effect on which frames are received by the adapter.

MAC Address Changes. Setting to Reject at the vSwitch level protects against MAC address spoofing. If the guest OS changes the MAC address of the adapter to anything other than what is in the .vmx configuration file, all inbound frames are dropped. If the guest OS changes the MAC address back to match the MAC address in the .vmx configuration file, inbound frames are sent again.

Forged Transmits. Setting to Reject at the vSwitch level protects against MAC address

spoofing. Outbound frames with a source MAC address that is different from the one set on

the adapter are dropped.
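The two MAC-related policies can be read as simple frame filters. The sketch below models only the behavior described in this section; the function names and the example MAC addresses are hypothetical.

```python
def accept_inbound(effective_mac, vmx_mac, mac_changes="reject"):
    """MAC address changes: inbound frames are dropped while the guest's
    effective MAC differs from the MAC in the .vmx configuration file."""
    if mac_changes == "accept":
        return True
    return effective_mac.lower() == vmx_mac.lower()

def accept_outbound(source_mac, adapter_mac, forged_transmits="reject"):
    """Forged transmits: outbound frames are dropped when their source MAC
    differs from the MAC set on the adapter."""
    if forged_transmits == "accept":
        return True
    return source_mac.lower() == adapter_mac.lower()

VMX_MAC = "00:50:56:aa:bb:01"      # hypothetical address from the .vmx file
SPOOFED_MAC = "00:50:56:aa:bb:99"  # hypothetical address set by the guest OS

print(accept_inbound(SPOOFED_MAC, VMX_MAC))   # False: guest changed its MAC
print(accept_inbound(VMX_MAC, VMX_MAC))       # True: MAC restored
print(accept_outbound(SPOOFED_MAC, VMX_MAC))  # False: forged source address
```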

5.3 Network Physical Design Specifications

This section expands on the logical network design in the corresponding previous section by

providing details on the physical NIC layout and physical network attributes.

Table 16. vSwitches by Physical/Virtual NIC, Port and Function

| vSwitch | vmnic | NIC / Slot | Port | Function |
|---|---|---|---|---|
| 0 | 0 | Onboard | 0 | Management Console and vMotion |
| 0 | 2 | N/A | 1 | Management Console and vMotion |
| 1 | 1 | Onboard | 0 | VM, FT, and Storage over IP traffic |
| 1 | 3 | Quad PCIe Slot 7 | 1 | VM, FT, and Storage over IP traffic |
| 1 | 5 | Quad PCIe Slot 7 | 0 | VM, FT, and Storage over IP traffic |
| 1 | 7 | Onboard | 1 | VM, FT, and Storage over IP traffic |
| 1 | 4 | Quad PCIe Slot 7 | 0 | VM, FT, and Storage over IP traffic |
| 1 | 6 | Onboard | 1 | VM, FT, and Storage over IP traffic |

Table 17. Virtual Switch Port Groups and VLANs

| vSwitch | Port Group Name | VLAN ID |
|---|---|---|
| 0 | MGMT-100 | 100 |
| 0 | VMOTION-500 | 500 |
| 1 | PROD-200 | 200 |
| 1 | DEV-300 | 300 |
| 1 | FT-600 | 600 |
| 1 | NFS-650 | 650 |
| 0 | N/A | 1 |

See Appendix D for more information on the physical network design specifications.

5.4 Network I/O Control

Virtual Distributed Switch1 (vDS1) is configured with Network I/O Control enabled. After Network I/O Control is enabled, traffic through that virtual distributed switch is divided into the following network resource pools: FT traffic, iSCSI traffic, vMotion traffic, management traffic, NFS traffic, and virtual machine traffic. This design specifies that virtual machine, iSCSI, and FT network traffic are dedicated to Virtual Distributed Switch1.

The priority of the traffic from each of these network resource pools is set by the physical adapter shares and host limits for each network resource pool. Virtual machine traffic is set to High, the FT resource pool is set to Normal, and iSCSI traffic is set to Low. These shares apply only when the physical adapter is saturated.

Note The iSCSI traffic resource pool shares do not apply to iSCSI traffic on a dependent

hardware iSCSI adapter.

Table 18. Network Resource Pool Shares and Host Limits

| Network Resource Pool | Physical Adapter Shares | Host Limit |
|---|---|---|
| Fault Tolerance | Normal | Unlimited |
| iSCSI | Low | Unlimited |
| Management | N/A | N/A |
| NFS | N/A | N/A |
| Virtual Machine | High | Unlimited |
| vMotion | N/A | N/A |

Network I/O Settings Explanation

Host Limits. Host limits are the upper limit of bandwidth that the network resource pool can

use.

Physical Adapter Shares. Shares assigned to a network resource pool determine the portion of the total available bandwidth guaranteed to the traffic associated with that network resource pool, relative to the shares of the other pools.

o High. Sets the shares for this resource pool to 100.

o Normal. Sets the shares for this resource pool to 50.

o Low. Sets the shares for this resource pool to 25.

o Custom. A specific number of shares, from 1 to 100, for this network resource pool.
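The effect of the share values can be seen by dividing a saturated uplink proportionally among the pools configured in this design. The sketch below assumes a single 1 Gbps uplink on which all three pools are competing at once; the function name is hypothetical.

```python
SHARE_VALUES = {"High": 100, "Normal": 50, "Low": 25}

def bandwidth_under_contention(pool_shares, link_mbps):
    """Split a saturated link among active pools in proportion to their shares."""
    total = sum(SHARE_VALUES[level] for level in pool_shares.values())
    return {pool: link_mbps * SHARE_VALUES[level] / total
            for pool, level in pool_shares.items()}

# Share levels from this design; the 1000 Mbps link speed is an assumption.
pools = {"Virtual Machine": "High", "Fault Tolerance": "Normal", "iSCSI": "Low"}
for pool, mbps in bandwidth_under_contention(pools, 1000).items():
    print(f"{pool}: {mbps:.0f} Mbps")
# Virtual Machine: 571 Mbps
# Fault Tolerance: 286 Mbps
# iSCSI: 143 Mbps
```

When the adapter is not saturated, the shares have no effect and each pool can use whatever bandwidth is free, up to its host limit.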

6. vSphere Shared Storage Architecture

6.1 Storage Layer Logical Design

To address customer requirements, the following design options were proposed during the design

workshops. For each design decision, the impact on each infrastructure quality is noted. The

selected design option is then explained with the appropriate justification.

DELETE THE FOLLOWING HIGHLIGHTED GUIDANCE TEXT AFTER YOU READ IT AND

REMOVE THE HIGHLIGHTING FROM THE DESIGN DECISION TEMPLATE.

The following Design Decision is an example. Please follow the model below to communicate the

design decisions appropriate to your customer and their requirements. See Section 3.1 for an

example.

6.1.1 Design Decision 1

Description of the design decision

6.1.1.1. Option 1: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

6.1.1.2. Option 2: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

Further details should be included here. Also highlight any relevant requirements, assumptions

and/or constraints that will impact this decision.

Table 19. Option 1 Name or Option 2 Name

| Design Quality | Option 1 | Option 2 | Comments |
|---|---|---|---|
| Availability | ↑ | ↑ | Both options improve availability, though Option 1 would guarantee a higher level. |
| Manageability | ↓ | o | Option 1 would be harder to maintain due to increased complexity. |
| Performance | o | o | Both design options have no impact on performance. |
| Recoverability | ↑ | ↑ | Both options improve recoverability. |
| Security | o | o | Both design options have no impact on security. |

Legend: ↑ = positive impact on quality; ↓ = negative impact on quality; o = no impact on quality

Which option was selected and why?

6.2 Shared Storage Platform

This section details the shared storage proposed for the vSphere infrastructure design.

Table 20. Shared Storage Logical Design Specifications

| Attribute | Specification |
|---|---|
| Storage type | Fibre Channel SAN |
| Number of storage processors | 2 (redundant) |
| Number of switches | 2 (redundant) |
| Number of ports per host per switch | 2 |
| LUN size | 1TB |
| Total LUNs | 50 |
| VMFS datastores per LUN | 1 |
| VMFS version | 3.33 |

6.3 Shared Storage Design

The following figure illustrates the design.

Figure 3. SAN Diagram

[Figure 3 labels: ESX/ESXi Host with vmhba0, vmhba1, vmhba2, vmhba3; Fibre Switch A; Fibre Switch B; SAN Storage Processor A; SAN Storage Processor B; 1TB LUNs, each holding one VMFS datastore, numbered VMFS 1 through VMFS 50]

Based on the results of the physical system assessment, it was determined that, on average, each system has a 36GB system volume with 9GB used and a 143GB data volume with 22GB used. After projecting for volume growth and providing a minimum of 33% free space per volume, it was determined for the purpose of estimating overall storage requirements that each virtual machine would be configured with a 12GB system volume and a 40GB data volume. Unless constrained by specific application or workload requirements, or by special circumstances (such as being protected by VMware Fault Tolerance), all data volumes are provisioned as thin disks and the system volumes are deployed as thick. This strategic over-provisioning saves an estimated 8.25TB of storage, assuming that on average 50% of the currently available storage on each data volume remains unused. The consumption of each storage volume is monitored in production, with alarms configured to alert when any volume approaches capacity so that there is sufficient time to source and provision additional disk.

With the intent to maintain at least 15% free capacity on each VMFS volume for VM swap files, snapshots, logs, and thin volume growth, it was determined that 48.7TB of available storage is required to support 1,000 virtual machines after accounting for the long-term benefit of thin provisioning. This will be provided in 50 LUNs of 1TB each (the additional 1.23TB is for growth and test capacity). These LUNs will be zoned to all hosts and formatted as 50 VMFS datastores.

A LUN size of 1TB was selected because it provides the best balance between performance and manageability, with approximately 20 VMs and 40 virtual disks per volume. Although larger LUNs of up to 2TB (without extents) and 64TB (with extents) are possible, this size was chosen for several reasons. For manageability, it is large enough to use disk resources efficiently and limit storage sprawl. A LUN smaller than the maximum maintains a reasonable RTO and reduces the risks associated with losing a single LUN. In addition, the size limits the number of VMs that reside on a single LUN.
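The per-volume density quoted above follows directly from the totals. The sketch below assumes that VMs are spread evenly across the LUNs and that each VM has two virtual disks (one 12GB system volume and one 40GB data volume); the function name is hypothetical.

```python
def per_lun_density(total_vms, total_luns, disk_sizes_gb):
    """Return (VMs per LUN, virtual disks per LUN, nominal provisioned GB per LUN)."""
    vms_per_lun = total_vms / total_luns
    disks_per_lun = vms_per_lun * len(disk_sizes_gb)
    # Nominal size before any thin-provisioning savings are taken into account.
    provisioned_gb = vms_per_lun * sum(disk_sizes_gb)
    return vms_per_lun, disks_per_lun, provisioned_gb

vms, disks, provisioned = per_lun_density(total_vms=1000, total_luns=50,
                                          disk_sizes_gb=[12, 40])
print(vms, disks, provisioned)  # 20.0 40.0 1040.0
```

The nominal provisioned figure is shown before thin-provisioning savings, which is why monitoring of actual consumption on each volume matters for this design.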

Additionally, three NFS volumes are presented to each ESX/ESXi host for the storage of virtual machine templates and guest operating system installation CD images (ISOs), and to provide administrators with second-tier storage for log and VM archival and infrastructure testing. These files are kept separate from VM files because non-VM files can often have higher I/O characteristics.

Each ESX/ESXi host is to be provisioned with a vSS switch supported by two physical Gigabit

Ethernet adapters dedicated for IP storage connectivity.

6.4 Shared Storage Physical Design Specifications

This section details the physical design specifications of the shared storage corresponding to the

previous section that describes the logical design specifications.

Table 21. Shared Storage Physical Design Specifications

Attribute | Specification
Vendor and model | Storage vendor and model.
Type | Active/passive.
ESX/ESXi host multipathing policy | Most Recently Used (MRU). Set because the SAN is an active/passive array to avoid path thrashing. With MRU, a single path to the SAN is used until it becomes inactive, at which point it switches to another path and continues to use this new path until it fails; the preferred path setting is disregarded.
Minimum/Maximum speed rating of switch ports | 2Gbps/4Gbps.

See Appendix E for an inventory of VMFS and NFS volumes.
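The MRU behavior described in Table 21 can be illustrated with a small model. This is a sketch of the policy as stated in the text only (stay on the current path until it fails, then move to another path and do not fail back). It is not VMware's implementation, and the class, method, and path names are hypothetical.

```python
class MruPathSelector:
    """Toy model of a Most Recently Used (MRU) multipathing policy."""

    def __init__(self, paths):
        if not paths:
            raise ValueError("at least one path is required")
        self.paths = list(paths)
        # True means the path is currently available.
        self.state = {path: True for path in self.paths}
        self.active = self.paths[0]

    def set_path_state(self, path, available):
        """Record a path failing or recovering, then re-evaluate the active path."""
        self.state[path] = available
        # Only switch when there is no usable active path. A recovered path
        # never displaces a working active path, so there is no failback.
        if self.active is None or not self.state[self.active]:
            self.active = next((p for p in self.paths if self.state[p]), None)


# Hypothetical path names for illustration.
selector = MruPathSelector(["path_a", "path_b"])
selector.set_path_state("path_a", False)   # active path fails
after_failure = selector.active            # selector moves to path_b
selector.set_path_state("path_a", True)    # original path recovers
after_recovery = selector.active           # selector stays on path_b
print(after_failure, after_recovery)
```

Because the selector never returns to a recovered path on its own, hosts do not repeatedly move a LUN between storage processors, which is the path-thrashing concern on an active/passive array.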

6.5 Storage I/O Control

Storage I/O Control is enabled. This allows cluster-wide storage I/O prioritization, providing the

ability to control the amount of storage I/O that is allocated to virtual machines during periods of

I/O congestion. The shares are set per virtual machine and can be adjusted for each VM based

on need.

Table 22. Storage I/O Enabled

Datastore | Path | Storage I/O Enabled
Prod_san01_02 | vmhba1:0:0:3 /dev/sda3 48f85575-5ec4c587-b856-001a6465c102 | yes
Prod_san01_07 | vmhba2:0:4:1 /dev/sdc1 48fbd8e5-c04f6d90-1edb-001cc46b7a18 | yes
Prod_san01_37 | vmhba32:0:1:1 /dev/sde1 48fe2807-7172dad8-f88b-0013725ddc92 | yes
Prod_san01_44 | vmhba32:0:0:1 /dev/sdd1 48fe2a3d-52c8d458-e60e-001cc46b7a18 | yes

Table 23. Disk Shares and Limits

VM | Disk | Shares | Limit IOPS
ds007 | Disk 1 | Low | 500
kf002 | Disk 1 and 2 | Normal | 1000
jf001 | Disk 1 | High | 2000
rs003 | Disk 1 and 2 | Custom | 350

Storage I/O Control Settings Explanation

Storage I/O Enabled. Storage I/O Control is enabled per datastore. Navigate to the Configuration tab > Properties to verify that the feature is enabled.

Storage I/O Shares. Storage I/O shares are similar to VMware CPU and memory shares. Shares define the relative priority of the virtual machines for distribution of storage I/O resources during periods of I/O congestion. Virtual machines with higher shares have higher throughput and lower latency.

Limit IOPS. By default, the IOPS allowed for a virtual machine are unlimited. Setting a limit caps the IOPS allowed to that virtual machine. If a virtual machine has multiple disks, you must set the same IOPS value for all the disks that belong to that virtual machine.
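To make the interaction between shares and limits concrete, the sketch below divides a fixed IOPS budget among the virtual machines of Table 23 in proportion to their shares, then applies each limit. It is a simplified proportional model for illustration and does not describe how vSphere enforces shares internally. The numeric values for Low, Normal, and High are the standard vSphere disk share levels. The custom share value for rs003 and the congested-datastore budget of 2,000 IOPS are hypothetical, and limits are treated per VM rather than per disk.

```python
# Standard vSphere disk share levels; used here to turn Table 23 into numbers.
SHARE_LEVELS = {"Low": 500, "Normal": 1000, "High": 2000}


def allocate_iops(vms, available_iops):
    """Split available_iops across VMs in proportion to shares, capped by limits.

    vms maps a VM name to (shares, limit_iops); limit_iops may be None (unlimited).
    IOPS left over by a capped VM are redistributed among the remaining VMs.
    """
    allocation = {}
    remaining = dict(vms)
    budget = float(available_iops)
    while remaining:
        total_shares = sum(shares for shares, _ in remaining.values())
        # VMs whose proportional slice would reach or exceed their limit.
        capped = {
            name: limit
            for name, (shares, limit) in remaining.items()
            if limit is not None and budget * shares / total_shares >= limit
        }
        if not capped:
            # Nobody hits a limit: hand out the budget purely by shares.
            for name, (shares, _) in remaining.items():
                allocation[name] = budget * shares / total_shares
            break
        for name, limit in capped.items():
            allocation[name] = float(limit)
            budget -= limit
            del remaining[name]
    return allocation


vms = {
    "ds007": (SHARE_LEVELS["Low"], 500),
    "kf002": (SHARE_LEVELS["Normal"], 1000),
    "jf001": (SHARE_LEVELS["High"], 2000),
    "rs003": (1500, 350),  # hypothetical custom share value
}
allocation = allocate_iops(vms, available_iops=2000)  # hypothetical budget
for name, iops in allocation.items():
    print(f"{name}: {iops:.0f} IOPS")
```

In this model rs003 is held at its 350 IOPS limit even though its shares would entitle it to more, and the IOPS it cannot use are shared among the other three virtual machines in the ratio of their shares.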

7. VMware vCenter Server System Design

7.1 Management Layer Logical Design

The Management Layer

To address customer requirements, the following design options were proposed during the design

workshops. For each design decision the impact on each infrastructure quality is noted. The

selected design option is then explained with the appropriate justification.

DELETE THE FOLLOWING HIGHLIGHTED GUIDANCE TEXT AFTER YOU READ IT AND

REMOVE THE HIGHLIGHTING FROM THE DESIGN DECISION TEMPLATE.

The following Design Decision is an example. Please follow the model below to communicate the

design decisions appropriate to your customer and their requirements. See Section 3.1 for an

example.

7.1.1 Design Decision 1

Description of the design decision

7.1.1.1. Option 1: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

7.1.1.2. Option 2: Name

Advantages:

Advantage 1

Advantage 2

Drawbacks:

Drawback 1

Drawback 2

Further details should be included here. Also highlight any relevant requirements, assumptions

and/or constraints that will impact this decision.

Table 24. Option 1 Name or Option 2 Name

Design Quality Option 1 Option 2 Comments

Availability Both options improve availability, though Option

1 would guarantee a higher level.

Manageability o Option 1 would be harder to maintain due to

increased complexity.

Performance o o Both design options have no impact on

performance

Recoverability Both options improve recoverability

Security o o Both design options have no impact on security

Legend: = positive impact on quality; = negative impact on quality; o = no impact on quality

Which option was selected and why?

7.2 vCenter Server Platform

This section details the VMware vCenter Server proposed for the vSphere infrastructure design.

Table 25. vCenter Server Logical Design Specifications

Attribute | Specification
vCenter Server version | 4.1
Physical or virtual system | Virtual
Number of CPUs | 2
Processor type | VMware vCPU
Processor speed | N/A
Memory | 4GB
Number of NIC and ports | 1/1
Number of disks and disk sizes | 2: 12GB (C) and 40GB (D)
Operating system and SP level | Windows Server 2003 Enterprise R2 32-bit SP1

VMware vCenter Server, the heart of the vSphere infrastructure, is implemented on a virtual

machine, as opposed to a standalone physical server. Virtualizing vCenter Server enables it to

benefit from advanced features of vSphere, including VMware HA and vMotion.

The exact vCenter Server build will be selected closer to implementation from the stable, supported releases available at that time.

7.3 vCenter Server Physical Design Specifications

This section details the physical design specifications of the vCenter Server.

Table 26. vCenter Server System Hardware Physical Design Specifications

Attribute | Specification
Vendor and model | VMware VM virtual hardware 7
Processor type | VMware vCPU
NIC vendor and model | VMware Enhanced VMXNET
Number of ports/NIC x speed | 1 x Gigabit Ethernet
Network | Management network
Local disk RAID level | N/A

7.4 vCenter Server and Update Manager Databases

This section details the specifications for the vCenter Server and Update Manager databases.

Table 27. vCenter Server and Update Manager Databases Design

Attribute | Specification
Vendor and version | Microsoft SQL Server 2005
Authentication method | SQL Server Authentication
Recovery method | Full
Database autogrowth | Enabled in 1MB increments
Transaction log autogrowth | In 10% increments; restricted to 2GB maximum size
vCenter statistics level | 3
Estimated database size | 41.74GB

Setting Explanations

Authentication method. Per the current database security policies, SQL Server Authentication, and not Windows Authentication, will be used to secure the vCenter Server databases. A SQL Server account with a strong password will be created to support vCenter Server and vCenter Update Manager access to their respective databases.

Recovery method. The full recovery method helps to ensure that no data is lost if a database failure occurs between backups. Because it maintains complete records of all changes to the database within the transaction logs, it is critical that regular backups, including transaction log backups, are taken so that the logs are truncated (groomed). The DBA team schedules incremental nightly and full weekend backups of the vCenter and vCenter Update Manager databases.

Database autogrowth. The vCenter Server database can expand on demand in 1MB increments with no restriction on its growth.

Transaction log autogrowth. The transaction log is restricted to a maximum of 2GB to prevent it from filling the log volume. Because the vCenter Server and vCenter Update Manager databases are backed up nightly (truncating the logs), the 2GB maximum should be more than sufficient.

vCenter statistics level. Level 3 gives more comprehensive vCenter statistics than the default setting.

Estimated database size. 41.74GB was calculated using the vCenter Advanced Settings tool, assuming 24 hosts and 1,084 VMs at the above statistics level.
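The transaction log autogrowth setting (10% increments, capped at 2GB) can be explored with a short calculation. The sketch below is an illustration only: the initial log size of 512MB is a hypothetical value that the design does not specify, and the model ignores any rounding the database engine applies to growth increments.

```python
def log_growth_steps(initial_mb, growth_pct=10, max_mb=2048):
    """Return the log size in MB after each autogrowth event, up to max_mb."""
    if initial_mb <= 0:
        raise ValueError("initial_mb must be positive")
    sizes = []
    size = float(initial_mb)
    while size < max_mb:
        # Grow by a percentage of the current size, never past the cap.
        size = min(size * (1 + growth_pct / 100), max_mb)
        sizes.append(size)
    return sizes


# Hypothetical starting size; 10% growth and the 2GB cap come from Table 27.
steps = log_growth_steps(initial_mb=512)
print(f"growth events before reaching the cap: {len(steps)}")
print(f"final size: {steps[-1]:.0f}MB")
```

Because each increment is a percentage of the current size, later growth events are larger than earlier ones, so a log that is not truncated approaches the 2GB ceiling faster than a fixed-increment setting would suggest.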

The corporate database platform, Microsoft SQL Server 2005, will be used because a trained team of DBAs already supports several physical database servers on this platform. For this initial vSphere infrastructure, this resource is leveraged, and the databases for both vCenter Server and vCenter Update Manager are hosted on a separate, production physical database server system.

Table 28. vCenter Server and Update Manager Database Names

Attribute | Specification
vCenter database name | VC01DB01
Update Manager database name | VUM01DB01
